Implementing an Advanced Distribution Management System (ADMS) is a complex undertaking, and one challenge is foundational: ensuring the network model data is complete, accurate, and current. High-quality network model data, both electrical and graphical, is a vital part of an ADMS, as it enables the system to interpret, monitor, and control the distribution network. Without a reliable model, the system’s ability to manage grid operations is compromised from the outset.

One major obstacle to maintaining a high-quality network model is data fragmentation. Critical information needed to support ADMS functions is often dispersed across various systems, databases, diagrams, and sometimes even paper maps. Collecting information from these independent sources often requires complex extraction, transformation, and validation processes, each of which increases the risk of mismatched or incomplete data. As a result, data synchronization becomes more difficult and time-consuming, frequently leading to dataset inconsistencies and to delays in building or updating the network model within the ADMS. Inaccurate or incomplete data can hinder ADMS functions, resulting in operational errors and posing safety hazards to utility personnel and the public. Therefore, rigorous data preparation, validation, and testing are essential stages of any ADMS implementation or upgrade.

Achieving reliable network model data requires close collaboration between data mappers, data analysts, engineers, and system operators. By aligning operational requirements with data models and system configurations, utilities can ensure their ADMS supports safe and efficient grid management. This article introduces a collaborative approach for testing and validating network model data—laying the groundwork for accurate, reliable, and high-performing ADMS operations.

To effectively leverage network model data within an ADMS, utilities must first develop a deep understanding of data standards, data sources, structure, governance, and maintenance. A network model’s effectiveness is contingent upon two key dimensions: accuracy and timeliness.

The Geographic Information System (GIS) typically serves as the primary repository for static network data such as device and asset locations and electrical connectivity. However, an ADMS demands more than connectivity data. It requires engineering-grade electrical parameters, protection and control data, customer profiles, and consumption and generation patterns. It also requires dynamic, real-time insight into device operating states and telemetry to support effective operational control and situational awareness. To meet this need, other data sources, such as asset management systems, power system analysis tools, and analytics and reporting systems, enrich the GIS data and converge with it to form the single network model that the ADMS requires. By combining static and dynamic data sources, a utility’s ADMS becomes a true reflection of its grid, driving more accurate modeling, better decision-making, and safer operations.

Integrating diverse data sources into one unified network model presents technical and procedural hurdles. Data elements often originate from distinct systems with varying structures, standards, and maintenance practices. For example, substation data, relay settings, Supervisory Control and Data Acquisition (SCADA) signals, Distributed Energy Resources (DERs), customer-related data, and generation and consumption profiles are typically maintained in separate databases or platforms. This fragmentation makes integration challenging, often necessitating manual mapping, data extraction, and the creation of links to properly align these datasets with their corresponding GIS representations. Integration requires advanced synchronization and validation mechanisms to ensure both accuracy and consistency across platforms.
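As an illustration of the link validation described above, the following minimal sketch cross-checks SCADA points against GIS devices. The record layout, the `telemetered` flag, and all identifiers are hypothetical and stand in for whatever keys a utility's own systems expose.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScadaPoint:
    point_id: str        # hypothetical SCADA point name
    gis_device_id: str   # hypothetical key linking the point to a GIS device


def check_links(gis_devices, scada_points):
    """Cross-check SCADA points against GIS devices.

    gis_devices: dict mapping device id -> True if the device is expected
                 to be telemetered (an assumed attribute for this sketch).
    Returns (orphan_points, unmapped_devices), both sorted lists.
    """
    # Points that reference a device the GIS does not know about.
    orphan_points = sorted(
        p.point_id for p in scada_points if p.gis_device_id not in gis_devices
    )
    # Telemetered devices that no SCADA point references.
    linked = {p.gis_device_id for p in scada_points}
    unmapped_devices = sorted(
        device_id
        for device_id, telemetered in gis_devices.items()
        if telemetered and device_id not in linked
    )
    return orphan_points, unmapped_devices
```

Both result lists are candidates for manual review rather than automatic correction, since either the GIS record or the SCADA mapping could be the side that is wrong.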

Recommended integration strategies and data management practices include:

By incorporating these diverse data sources into a cohesive, high-fidelity network model, utilities can build the foundation for effective operational efficiency, situational awareness, and outage management.

The scope of network model testing should be tailored to the requirements of specific ADMS applications. Not all applications require the same breadth of data. Analytical tools such as Power Flow Analysis, State Estimation, Fault Calculation Analysis, model-based Volt/VAR Optimization (VVO), Fault Location, Isolation, and Service Restoration (FLISR), and a Distributed Energy Resource Management System (DERMS) require a more detailed set of network model attributes, while a basic Outage Management System (OMS) may require less granularity.

Building and maintaining a comprehensive network model is resource-intensive, and the value it delivers varies by application. Testing efforts should therefore be prioritized based on the operational impact and specific data requirements of each ADMS component. This alignment is fostered through collaboration between utilities and ADMS vendors, allowing teams to identify critical data elements, define accuracy standards, and establish fallback logic for any incomplete or missing data. This approach helps streamline implementation and ensures targeted and measurable testing. Take OMS as an example: its functionality relies heavily on accurate connectivity, phasing, customer information, switching device configurations, and telemetry mappings, especially in networks with limited SCADA coverage.

Reliable OMS performance depends on several key data elements:

Inaccuracies in these areas can lead to incorrect outage predictions, misguided field dispatches, longer restoration times, higher operational costs, and reduced customer satisfaction. Customized testing frameworks allow organizations to calibrate validation depth according to the complexity of the integrated systems, the maturity of the network model data environment, and the desired operational performance outcomes.
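Connectivity, one of the OMS dependencies named earlier, lends itself to an automated check. The sketch below assumes the model can be exported as a list of edges with a closed/open flag (a hypothetical format) and uses a breadth-first traversal from the feeder source to list nodes that cannot be reached through closed devices.

```python
from collections import deque


def unreachable_nodes(edges, source, all_nodes):
    """List nodes that cannot be reached from `source` through closed edges.

    edges: iterable of (node_a, node_b, closed) tuples, where `closed` is
           True for lines and closed switches, False for open switches.
    """
    # Build an undirected adjacency map using only closed edges.
    adjacency = {}
    for node_a, node_b, closed in edges:
        if closed:
            adjacency.setdefault(node_a, set()).add(node_b)
            adjacency.setdefault(node_b, set()).add(node_a)

    # Breadth-first traversal starting at the feeder source.
    seen = {source}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbor in adjacency.get(node, ()):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)

    return sorted(set(all_nodes) - seen)
```

Nodes behind a normally open switch will appear in the result by design, so the output is best read against the list of expected open points before anything is treated as a connectivity error.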

A well-defined, application-specific testing methodology ensures that network model data integrity supports the functional goals of the ADMS. This approach enables utilities to strengthen situational awareness, make more accurate operational decisions, enhance safety, improve field response efficiency, and increase customer satisfaction through shorter outage durations and more effective restoration workflows. Additionally, a tailored testing approach enables utilities to maintain a sustainable data governance process that can adapt and evolve as systems are enhanced and operational needs change. In essence, a targeted and dynamic testing framework elevates network model validation from a routine compliance activity to a strategic driver of operational excellence and reliability. This empowers utilities to proactively manage complexity, reduce risk, and drive continuous improvement across operations.

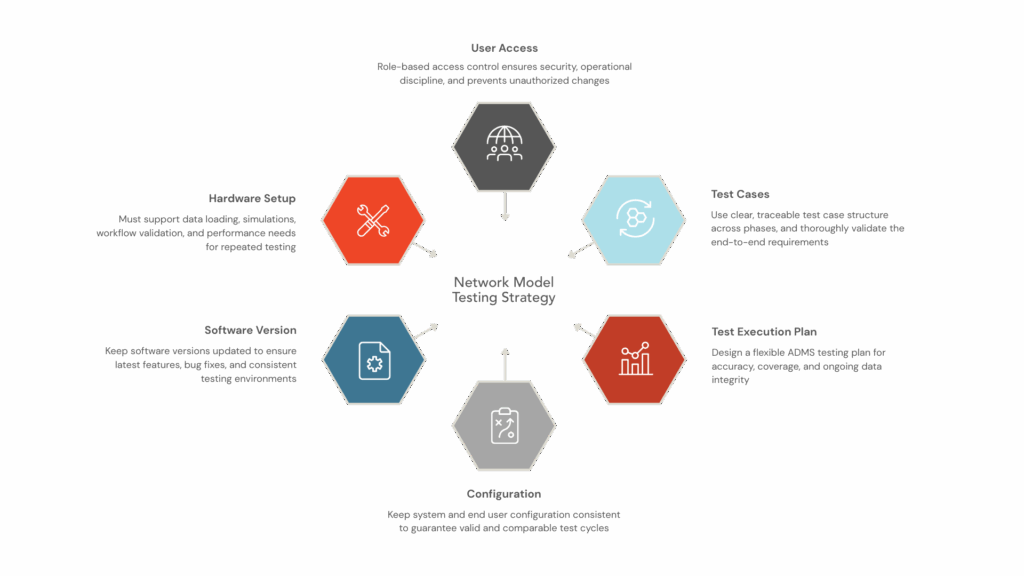

The hardware in the testing environment should be able to handle data loading, run simulations, and validate workflows, while also meeting the storage and performance needs of large datasets and repeated testing cycles. On the software side, versions should be kept up to date so that the latest features, bug fixes, and network models are fully integrated and validated. The testing environment and the future production environment must also run the same software versions; otherwise, test results may not carry over to production. Testing should verify data quality, confirm the effectiveness of cleanup and corrections made during network model validation and maintenance, and ensure the software can accurately process, display, and analyze data.

Managing user access and security is another crucial aspect. Clearly defined roles and permissions help maintain the integrity and traceability of testing activities. Access should extend to reporting tools, dashboards, and configuration systems. Organizing users into accounts and groups with well-defined permission hierarchies creates a secure and auditable testing process. Role-based access control not only enforces operational discipline but also reduces the risk of unauthorized changes to network model data or testing parameters.

Configuration management is at the heart of the testing setup. Once baseline parameters are validated and approved during model build and update verification, changes should be limited to those with explicit justification. Configuration elements include graphical settings (such as symbol size, color coding, and visibility layers), display and navigation parameters for network topology, and default operational settings for data imports, updates, and refresh cycles. Keeping configuration stable ensures that test results are consistent across cycles and that any discrepancies are due to data issues, not environmental inconsistencies.
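One way to keep configuration stable between test cycles is to compare the current settings against the approved baseline before each run. The sketch below assumes both can be exported as flat key/value dictionaries; the setting names in the test data would be the utility's own.

```python
ABSENT = "<absent>"  # marker for a setting that exists on one side only


def config_drift(baseline, current):
    """Return {setting: (baseline_value, current_value)} for every difference."""
    drift = {}
    for key in sorted(set(baseline) | set(current)):
        before = baseline.get(key, ABSENT)
        after = current.get(key, ABSENT)
        if before != after:
            drift[key] = (before, after)
    return drift
```

An empty result suggests that any discrepancy found in the following test cycle comes from the data rather than from the environment; a non-empty result lists the settings that need an explicit justification.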

A structured approach to test procedures and case definition brings clarity, traceability, and repeatability throughout the testing lifecycle. Each test case should include a unique ID, title, author, assignee, execution type (manual or automated), execution status (not executed, in progress, passed, failed, blocked, postponed, needs review, not applicable), a description of the test objective, preconditions, and detailed test steps with expected outcomes. This structure supports consistent execution across testers and project phases, reinforcing the reliability of the validation process.
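The test case fields listed above map naturally onto a small data structure. The following sketch is one possible representation; the class and field names are illustrative and not taken from any particular test management tool.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class ExecutionType(Enum):
    MANUAL = "manual"
    AUTOMATED = "automated"


class ExecutionStatus(Enum):
    NOT_EXECUTED = "not executed"
    IN_PROGRESS = "in progress"
    PASSED = "passed"
    FAILED = "failed"
    BLOCKED = "blocked"
    POSTPONED = "postponed"
    NEEDS_REVIEW = "needs review"
    NOT_APPLICABLE = "not applicable"


@dataclass
class Step:
    action: str            # what the tester does
    expected_outcome: str  # what should be observed


@dataclass
class ModelTestCase:
    case_id: str           # unique ID
    title: str
    author: str
    assignee: str
    execution_type: ExecutionType
    objective: str         # description of the test objective
    preconditions: List[str] = field(default_factory=list)
    steps: List[Step] = field(default_factory=list)
    status: ExecutionStatus = ExecutionStatus.NOT_EXECUTED
```

Using enumerations for execution type and status keeps the allowed values fixed, which makes status reports comparable across testers and project phases.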

Once the environment is set up (data sources identified, ADMS integration established, modeling approach agreed upon, and scope defined), the next step is to develop a testing strategy tailored to the ADMS implementation. For initial deployments, testing focuses on validating the network model against trusted sources like GIS and SCADA, ensuring the new model accurately reflects the physical network and operational state. When replacing legacy systems, the emphasis shifts to comparing the ADMS network model with the legacy system’s representation to ensure accurate migration and functional equivalence.
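Validation against a trusted source can be sketched as an attribute-by-attribute comparison of the same device in both systems. The example below assumes each device is available as a flat dictionary; the attribute names and the tolerance are placeholders, not values prescribed by any ADMS.

```python
import math

ABSENT = "<absent>"  # marker for an attribute missing from the ADMS record


def _is_number(value):
    # bool is a subclass of int in Python, so exclude it explicitly.
    return isinstance(value, (int, float)) and not isinstance(value, bool)


def compare_records(source, adms, rel_tol=1e-6):
    """Return {attribute: (source_value, adms_value)} for every mismatch.

    Only attributes present in the trusted source record are checked.
    Numeric values are compared with a relative tolerance so that
    500 and 500.0 (or small rounding differences) do not raise flags.
    """
    mismatches = {}
    for key, expected in source.items():
        if key not in adms:
            mismatches[key] = (expected, ABSENT)
            continue
        actual = adms[key]
        if _is_number(expected) and _is_number(actual):
            same = math.isclose(expected, actual, rel_tol=rel_tol)
        else:
            same = expected == actual
        if not same:
            mismatches[key] = (expected, actual)
    return mismatches
```

The same function could serve a legacy migration by passing the legacy system's record as `source`, under the assumption that both systems can export comparable attribute names.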

Given the complexity of distribution networks, it’s often impractical to test every model element exhaustively. A more practical approach is to test a defined percentage of data based on device and model types, ensuring broad and meaningful coverage. Utilities can also test representative samples—such as one feeder from a batch of imported feeders—to validate the entire group. This sampling balances thorough validation with resource constraints.
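The percentage-based approach can be sketched as stratified sampling: devices are grouped by type and the same share is drawn from each group, with at least one device per type so that rare device types are not skipped. The input format and the fixed seed are assumptions made for this illustration.

```python
import random
from collections import defaultdict


def sample_by_type(devices, percent, seed=0):
    """Pick `percent` percent of devices from each device type.

    devices: iterable of (device_id, device_type) pairs.
    percent: whole number from 1 to 100.
    Returns {device_type: sorted list of sampled device ids}.
    """
    if not 1 <= percent <= 100:
        raise ValueError("percent must be between 1 and 100")

    groups = defaultdict(list)
    for device_id, device_type in devices:
        groups[device_type].append(device_id)

    rng = random.Random(seed)  # fixed seed makes the selection repeatable
    sample = {}
    for device_type in sorted(groups):
        ids = sorted(groups[device_type])
        # Integer ceiling of len(ids) * percent / 100, with a minimum of one.
        count = max(1, (len(ids) * percent + 99) // 100)
        sample[device_type] = sorted(rng.sample(ids, count))
    return sample
```

Keeping the seed fixed means a repeated test cycle examines the same devices, which makes results comparable; changing it between cycles widens coverage over time.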

Data comparison methods include:

Finally, regression and repeat testing are critical for maintaining data integrity over time. Testing should be repeated whenever devices are added or removed, source data is corrected, or software updates affect data processing or visualization. The testing approach should remain flexible, adapting to the unique characteristics of each ADMS implementation and the specific attributes of a utility’s network model. Continuous refinement based on operational experience, new requirements, and technological advances is key to sustaining effective testing practices.
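To decide what needs to be retested after a model update, one option is to fingerprint each feeder's records and compare the fingerprints between two snapshots. The sketch below assumes snapshots are available as dictionaries of JSON-serializable device records keyed by feeder name, each record carrying an `id` field; this layout is hypothetical.

```python
import hashlib
import json


def fingerprint(records):
    """Hash a feeder's device records independently of their order."""
    canonical = json.dumps(
        sorted(records, key=lambda record: record["id"]), sort_keys=True
    )
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def feeders_to_retest(previous, current):
    """Return the sorted names of feeders that were added, removed, or changed.

    previous, current: dict mapping feeder name -> list of device records.
    """
    changed = []
    for feeder in sorted(set(previous) | set(current)):
        if feeder not in previous or feeder not in current:
            changed.append(feeder)  # feeder added or removed
        elif fingerprint(previous[feeder]) != fingerprint(current[feeder]):
            changed.append(feeder)  # a device was added, removed, or edited
    return changed
```

This only detects data changes. A software update that affects processing or visualization would still call for a repeat of the relevant tests even when every fingerprint matches.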

Preparing network model data effectively is essential for building, testing, and maintaining a successful ADMS network model. This process requires a strong commitment to several key activities that together ensure operational integrity and reliability. It starts with a thorough assessment of technical and operational requirements, making sure the network model data aligns with both the goals of ADMS implementation and the broader needs of utility operations. Next comes a detailed analysis, where time and resources are dedicated to evaluating existing data sources and their quality, supporting transparent and accurate decision-making.

Careful data mapping is needed to convert network model data into ADMS-compatible formats, taking into account the capabilities of source systems and business needs. Equally important is establishing robust business processes for model creation, validation, and ongoing workflow improvement. Utilities should prioritize comprehensive testing of critical network model elements and commit the necessary resources to this effort. Ongoing maintenance is also vital, ensuring data quality through timely error correction, regular updates, and synchronization with source systems.

The accuracy of a utility’s network model directly affects the precision and reliability of ADMS functionalities and ADMS-driven operational actions. By investing in rigorous data preparation, validation, and close teamwork, utilities can transform their ADMS network model into a trusted foundation for real-time decision-making. Ultimately, these investments go beyond improving system performance—they equip and empower organizations to operate with confidence, optimize processes, and ensure a more resilient, reliable grid for the future.